Images mixed by Tetyana Lokot.

Ukrainian President Petro Poroshenko has publicly joined calls for a dedicated Ukrainian Facebook office. His support comes after numerous users in Ukraine, and in Russia, complained that their posts and accounts were being taken down or blocked even though they may not have violated Facebook's terms of service. Users in both countries claim these takedowns are politically motivated and that the posts are being reported for violations en masse by “Kremlin supporters.”

Ukrainians Campaign for Local Office

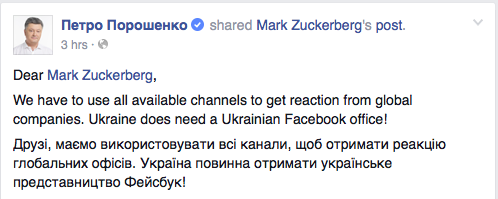

Poroshenko addressed Facebook founder Mark Zuckerberg in a post on Facebook, after other Ukrainian users commented on Zuckerberg's announcement of a townhall Q&A at the company's headquarters on May 14.

Screenshot of President Poroshenko's Facebook post addressing Zuckerberg.

We have to use all available channels to get reaction from global companies. Ukraine must get a Ukrainian Facebook office!

Poroshenko's statement was spurred by a popular Ukrainian comment on Zuckerberg's post. The user reported that posts like this photo of a Ukrainian soldier's daughter were allegedly being flagged as “nudity.” The commenter also linked to a petition suggesting Facebook should pay more attention to its Ukrainian segment and consider opening a regional office.

Lately, the blocking of popular and active users in the Ukrainian Facebook Community has become a common and regular practice.

Our requests for Facebook support to address these issues, our requests for explanations of the valid reasons for blocking or deactivating these accounts are often ignored, or – if considered – considered for a very long time (up to 10 months) and with no real resolution.

Russians Blame the Kremlin Bots

Russian Facebook users have also complained of unfair blocking, with RuNet guru Anton Nossik blaming the “Kremlin bots” for content takedowns and blocks on his own account and others. Nossik's examples from his own page did contain nudity (photos from a Biennale performance) and execution-style images (a screenshot from a Russian music video), so they may have indeed violated Facebook's rules, but he believes the posts were flagged as part of a systematic campaign by pro-Kremlin forces to silence dissenting voices of those who somehow end up on the “enemies of the people” roster.

Технология блокировки проста как мычание. В адрес Abuse Team англоязычной социальной сети направляются сотни и тысячи жалоб от ботов на некий аккаунт, предположительно нарушающий пользовательское соглашение этой самой сети. […] Но, видя такое количество жалоб единовременно, не читающий по-русски и не понимающий ситуации англоязычный модератор соцсети приходит к выводу, что пост действительно нарушает какие-то community guidelines — откуда ему знать про бюджеты на борьбу с национал-предателями в Интернете?!

The blocking technology is very simple. The Abuse Team of the English-language network gets hundreds and thousands of complaints from bots about an account which allegedly violates the terms of service of this network. […] But when they see such a volume of complaints simultaneously, an English-speaking website moderator who does not read Russian and does not understand the situation concludes that the post really does violate some community guidelines—how would they know about the budgets dispensed to fund the fight against “national traitors” online?!

Facebook has previously dismissed the claims that the number of complaints on a piece of content increases the likelihood the post might be taken down. In an October 2014 interview with RuNet Echo, Richard Allan, Facebook's VP for Public Policy in Europe, Middle East, and Africa, stressed that the company does not see a large volume of reports as a “good indicator” of whether or not a piece of content stands in violation of the site's policies.

We've been running the service for 10 years, and there are ways in which people try and game our reporting system. They try and use the system to get to other people on the site. One of the obvious ways people tried to use the system in the early days is to send in multiple reports in the hope that those could take down some content they didn't like. So we've very quickly learned that a lot of reports is not a very good indicator of whether content is bad.

Nossik's post comes on the heels of the swift blocking and unblocking of a May 6 post by Russian journalist Sergei Parkhomenko criticizing a leaked Russian report that assigned the blame for the MH17 tragedy to Ukraine. Previously, Facebook also deleted a post by LGBT activist Lena Klimova, who posted images of her online abusers and juxtaposed them with their hateful comments.

Commenting on the deletion of Sergei Parkhomenko's post, Facebook spokeswoman Sally Aldous told RFE/RL that the post was removed “mistakenly,” and that the FB team “deals with thousands of reports each day,” so mistakes do occasionally happen. Facebook did not say what triggered the removal. Parkhomenko believes his post did not violate any community standards and that the takedown was the result of pro-Russian online activists spamming Facebook with complaints.

The Same Rules for Everyone?

As early as the summer of 2014, Ukrainians complained of what they said was politically motivated blocking of prominent Facebook users, after several well-known pro-Ukrainian activists who were critical of the Kremlin and the pro-Russian rebels in Eastern Ukraine had their Facebook pages blocked. At the time, Ukrainians said the pages were blocked because of complaints by “organized pro-Putin groups of Russian users.” Ukrainian users also implied that the Ukrainian segment was being mismanaged by “biased staff” in the Russian office. Facebook denied this, explaining that both the Russian and the Ukrainian segments were managed by an international team from its Dublin headquarters.

While Ukrainians worry about whether the platform is managed impartially, Russian users have more to fear from Facebook's relations with their own government. In recent years, both Facebook and the U.S.-based social-networking site Twitter have removed posts and accounts critical of Russian authorities based on claims by Russian state media watchdog Roskomnadzor that they violate domestic laws.

In December 2014, Facebook blocked the event page for a protest rally in support of Putin critic and opposition figure Alexey Navalny for users in Russia, ostensibly at the behest of the Kremlin. After a major outcry, Facebook said it would not block other protest pages, but did not comment on the initial block.

As platforms like Facebook and Twitter become more central to the struggle for free speech and alternative public spaces around the world, it is more important than ever to recognize that these websites do not currently have tools in place to mitigate government pushback against activists and independent journalists, such as “political takedowns” through mass complaints or the misuse of the companies’ own rules (for instance, on what constitutes “hate speech” or “impersonation”) to ban unwanted content.

Facebook's Allan conceded that “people will try and work around systems,” whether through impersonation, reporting, or by other means.

We're not going to say never, that people aren't going to find new ways to game the system, but our philosophy is if that's happening, we will try and identify those problems, and we'll try and change our systems to make sure that they are protecting against any kind of abuse.

While this philosophy sounds reasonable, the fact remains that free speech in Ukraine, Russia, and other countries where traditional media systems are corrupted depends more and more on corporate platforms like Facebook and Twitter. Recognizing the instances of state actors trying to “game the system,” guarding the freedom of independent voices, listening to the grievances of individuals and communities, and addressing their concerns should be at the top of the agenda for social media companies if they want to retain the trust, and the screen time, of their users.

7 comments

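мене 05.13 2015р. забанили русо-фашисти, за те що я написав листа Цукербергеру, на 30 днів! дожився, в 69 років дістав від москалюг клятих.

[Translated from Ukrainian: On 05.13.2015 the Russo-fascists banned me for 30 days because I wrote a letter to Zuckerberg! What a thing to live to see: at 69 years old, I got it from the damned Moskals.]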

Thank you for providing a example of a hate speech, now I understand why FB and Twitter remove posts like that.

Do you read Ukrainian?