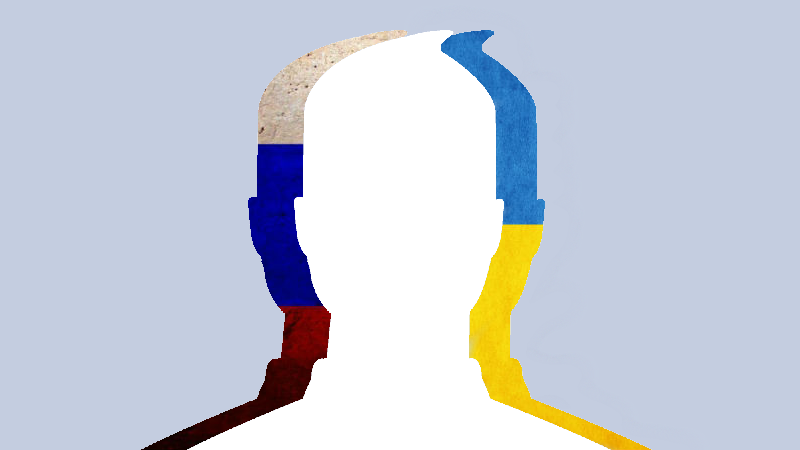

Images mixed by Tetyana Lokot.

In the social media war that has played out alongside the conflict on the ground in Ukraine, users on all sides resort to desperate measures: numerous complaints, reporting content, and mass commenting are all part of the arsenal. Disciplining the abusers is sometimes next to impossible, unless they violate a platform's terms of service.

In August, RuNet Echo reported on an outcry among Ukrainian Facebook users who accused Facebook of taking down pages of Ukrainian activists based on mass complaints from what they said were pro-Russian “organized groups.” In an open letter to Facebook's Mark Zuckerberg, Ukrainians also claimed the people managing the Ukrainian Facebook segment were biased against the pro-Ukrainian activists.

In the past, users ranging from political activists in the Arab region to LGBT rights defenders in the United States have made accusations of a similar nature. During the war in Gaza this past summer, Global Voices highlighted what appeared to be an uneven application of Facebook's Community Standards over content from Israeli and Palestinian users.

We asked Richard Allan, Facebook's VP for Public Policy in Europe, Middle East, and Africa, to shed some light on the content policies in Facebook's Ukrainian and Russian segments. He also talked about other attempts to “game the system” of content takedowns at Facebook, and the difficulties of dealing with a multi-lingual, global audience.

The content-review process at Facebook involves several layers of personnel. First, there is a policy team whose full-time job is to review Facebook's Community Standards and Terms of Use in conjunction with how people actually use the platform. Allan says this team is “a group of people from around the world, of mixed nationalities, deciding on those global standards.” The Community Standards are not set in stone, but rather refined over time, and the policy team deals with any exceptional cases sent to them from anywhere in the company, whenever there are concerns that the policies are being applied incorrectly or need revisions. A recent example is the site's move to augment its real-name policy — a move that users ranging from activists in repressive environments to drag performers have advocated for many years.

Facebook's other content-review group is also multi-national, with offices all over the world, including locations in Ireland, the US, and India. Although some reviewers might have an area of expertise, such as hate speech or bullying, the reviews are not grouped by country, but rather by language. Facebook employs native speakers of a huge variety of different languages to fill this role within the global review system.

[For] example, we have Arabic language support to deal with Arabic-language content, and it's not like we have a Facebook Egypt team and a Facebook Lebanon team. That's not the structure. It's a global structure with additional language support, not a specific country support option.

In the particular case of Ukraine, it is important to note that Facebook does not have a physical office in either Ukraine or Russia. All user services for the two countries are managed by Facebook Ireland Limited, a 500-strong international team based in Dublin. Allan says that Facebook's Russian-speaking representative, Yekaterina Skorobogatova, whose name was mentioned in complaints from Ukrainians as a possible biased party, has nothing to do with content review.

Her job has got nothing to do with content review stuff. People may have picked up on her because she has made presentations externally, but her job is to build partnerships with mobile operators. And she has worked building partnerships with third parties who want to work with Facebook in Russia because she is a native Russian speaker. But her role has got nothing at all to do with Facebook content or Facebook users. […] If we're speaking to a Russian regulator, we might speak to her to help us understand the language issues, because I am not a Russian speaker. But again, she has no responsibility for user content or decisions, which are entirely in the hands of other teams in the company.

Once someone reports a piece of content to Facebook, Allan says, it undergoes a review. If staff determine that the content breaches Facebook's rules, it is removed. Incitement to violence and hate speech are two of the most common types of speech removed for violating the website's Community Standards. This process is known to cause problems for page administrators, whose pages are removed simply because another individual posted something on their page that violates these standards.

Importantly, Allan acknowledges that content sometimes gets taken down in error, mostly due to the sheer scale of Facebook's operations and the fact that the review process is in human hands.

Sometimes we make mistakes. When we are reviewing significant amounts of content, it's possible that some of the decisions we've made are incorrect. And cumulatively, if there are enough decisions that the content on the page is bad, whether correct or incorrect, that can lead to the page going down. […] We're open to say we sometimes make mistakes, and at the scale at which we operate, that can sometimes be an issue.

Facebook encourages users to appeal if they believe their page has been taken down in error. “We do put pages back up again if we think we've taken them down incorrectly,” Allan stresses.

One recent case of what appears to be politically charged reporting is that of a page called “Груз 200 (Cargo 200),” a community that tracks Russian servicemen killed in action in Ukraine. The group currently has more than 25,000 followers. While members of the community often post sensitive content, such as graphic images from the conflict, the group's goal is ostensibly within Facebook's guidelines. Opponents of the group reported it, and the group was taken down on September 23, causing many complaints to Facebook and even an online petition asking to reinstate the page. In less than 24 hours, Facebook reacted to the complaints by restoring the page.

Many Facebook users believe that the more people report a particular piece of content, the more likely it is to be taken down. Although it is through this system that a piece of content is initially flagged and reviewed by Facebook personnel, Allan explains that the company does not see a large volume of reports as a “good indicator” of whether or not a piece of content stands in violation of the site's policies.

We've been running the service for 10 years, and there are ways in which people try and game our reporting system. They try and use the system to get to other people on the site. One of the obvious ways people tried to use the system in the early days is to send in multiple reports in the hope that those could take down some content they didn't like. So we've very quickly learned that a lot of reports is not a very good indicator of whether content is bad.

Once the reports start coming in, Facebook reviews the content against its standards, and makes a decision early on about whether the content is in violation of the norms.

After a certain number of reports, we are confident that the content is fine, so when the new reports come in, the people who receive them understand that those are reports on content that's already been reviewed multiple times, and therefore we can discount any additional reports on it.

The tactic on both the pro-Ukrainian and the pro-Russian sides seems to be similar: find content that may violate Facebook's rules and get as many people as possible to report the page. And indeed, reporting pages does work if the content actually is in breach of the standards. Ukrainian users have reported a concerted (and successful) effort to take down a pro-Russian group “Беркут—Украина (Berkut—Ukraine)” for content that contained threats of violence.

Allan acknowledges that if a page is popular and has a lot of followers, it's likely to fall under more scrutiny, and therefore, more likely to suffer if it is reported.

The system is designed to work that way: where content is important, and it's being seen by a lot of people, it's important that it meets the standards. So a really busy, active page with hate speech on it or incitement to violence is a problem. So we do want to see that content, review it, and, if necessary, take action against it.

Often, Facebook users report content simply because they're unhappy with it or don't agree with someone's point of view. This, according to Allan, is par for the course during political campaigns or other controversial circumstances.

[In any] situation where people feel quite partisan on one side or the other, it's a natural, normal behavior… If they feel strongly, if they disagree with something, they'll report it to us, just because they disagree. It's one way to express that, since we have no Dislike button on Facebook. We'll suddenly see a spike of reports and thousands of people reporting something. Could be a football match where a team does something they don't like, and they all go and report the Manchester United stuff.

When asked if users find other ways to game the system besides reporting content, especially in politically charged situations, Allan points to mass commenting as another widespread tactic.

There is a lot of activity in the form of organized comments from people on content they don't like. Someone will post something on a particular political situation, and rather than reporting, what you might see is a thousand comments appear very quickly, disagreeing with what is said. It's obvious that those people have coordinated or organized between themselves to post those thousand comments. But if they're real Facebook users expressing their real views, that's not something we feel we need to get involved in, as they're allowed to use the platform that way.

Technically, it would be against Facebook's rules, Allan says, for a government agency or anyone else to create groups of accounts just to start reporting things on Facebook. However, it's usually hard to prove that a government or an organization is behind accounts doing the mass commenting or reporting. As long as the individual accounts appear to be legitimate, the social media website is unlikely to investigate further or look for any proof of state sponsorship.

For many netizens, Facebook and websites like it have become indispensable spaces for free expression. They are also commercial entities, however, that strive to create an attractive space for all users and enforce their standards accordingly. Facebook makes it easy to report content that violates its norms, but the sheer volume of reports also means pages can be taken down by mistake. Facebook cares about authentic user identity, but by attempting to set a universal standard to define authenticity, it might miss elaborate attempts to create “sock puppets” that manipulate the discussion around a given issue, like the conflict in Ukraine.

Allan allows that “human behavior is such that people will try and work around systems,” whether through impersonation, reporting, or by other means.

We're not going to say never, that people aren't going to find new ways to game the system, but our philosophy is if that's happening, we will try and identify those problems, and we'll try and change our systems to make sure that they are protecting against any kind of abuse.

In volatile situations, like the ongoing conflict involving Ukraine and Russia, social media platforms have an even greater responsibility to their users to try and prevent abuse of free expression. Documenting the cases of manipulation and attempts to game the system becomes important, granting special significance to the work of citizen journalists and bloggers who archive these cases and share them with the public. As in any community, the integrity of any social media ecosystem relies on all sides talking to—and listening to—each other.
